Web App

Backdraft AI

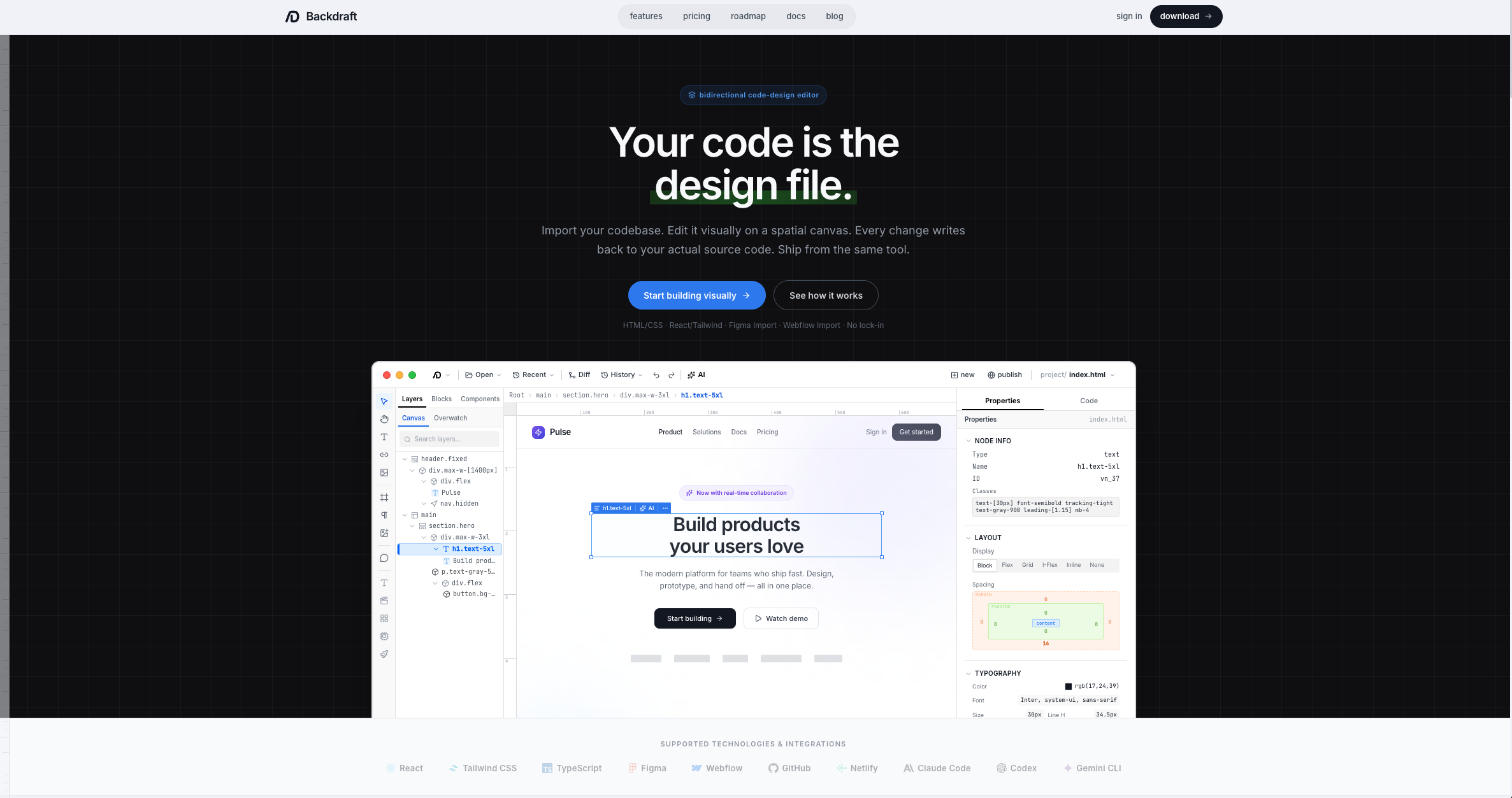

Bidirectional code-to-design editor. Import your codebase, visually edit it on a spatial canvas, and every change writes back to your source code.

Backdraft started as a folder-watching script that I used to evaluate AI models. I'd drop screenshots of websites I liked into a folder, and the script would extract a design system from the image, then generate a complete landing page in HTML and CSS. When a new model came out, I'd swap it in and run the pipeline end-to-end. The output told me everything about the model's design capabilities in a single pass.

I refined this workflow for over two years, building a design system extraction process that could capture not just colors and fonts, but the full structural composition of a page — spacing patterns, component hierarchy, aesthetic feel — with enough accuracy to recreate websites using smaller models in one shot.
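To make "full structural composition" more concrete, here is a minimal sketch of what an extracted design system might look like as data, and how its tokens could become CSS custom properties for a generated HTML/CSS page. The field names (`colors`, `fonts`, `spacing`, `components`, `mood`) and the helpers `toCssVariables` and `countComponents` are hypothetical illustrations, not Backdraft's actual schema or output format.

```typescript
// Hypothetical shape of an extracted design system (not Backdraft's real schema).
interface DesignSystem {
  colors: Record<string, string>; // named color tokens, e.g. { primary: "#1a1a2e" }
  fonts: { heading: string; body: string };
  spacing: number[]; // spacing scale in px, smallest first
  components: ComponentNode[]; // structural hierarchy of the page
  mood: string[]; // free-form aesthetic descriptors
}

interface ComponentNode {
  name: string;
  children: ComponentNode[];
}

// Emit the token parts of the design system as CSS custom properties
// that a generated stylesheet could reference with var(--...).
function toCssVariables(ds: DesignSystem): string {
  const lines: string[] = [];
  for (const [name, value] of Object.entries(ds.colors)) {
    lines.push(`  --color-${name}: ${value};`);
  }
  lines.push(`  --font-heading: ${ds.fonts.heading};`);
  lines.push(`  --font-body: ${ds.fonts.body};`);
  ds.spacing.forEach((px, i) => lines.push(`  --space-${i + 1}: ${px}px;`));
  return `:root {\n${lines.join("\n")}\n}`;
}

// Count every node in the component hierarchy (a cheap sanity check on extraction output).
function countComponents(nodes: ComponentNode[]): number {
  return nodes.reduce((n, c) => n + 1 + countComponents(c.children), 0);
}

const ds: DesignSystem = {
  colors: { primary: "#1a1a2e", accent: "#e94560" },
  fonts: { heading: "'Inter', sans-serif", body: "'Inter', sans-serif" },
  spacing: [4, 8, 16, 32],
  components: [{ name: "Hero", children: [{ name: "CTA", children: [] }] }],
  mood: ["minimal", "high-contrast"],
};

const css = toCssVariables(ds);
console.log(css);
```

Keeping tokens and structure in one object means the same record can drive both the stylesheet and the prompt that asks a model to rebuild the page.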

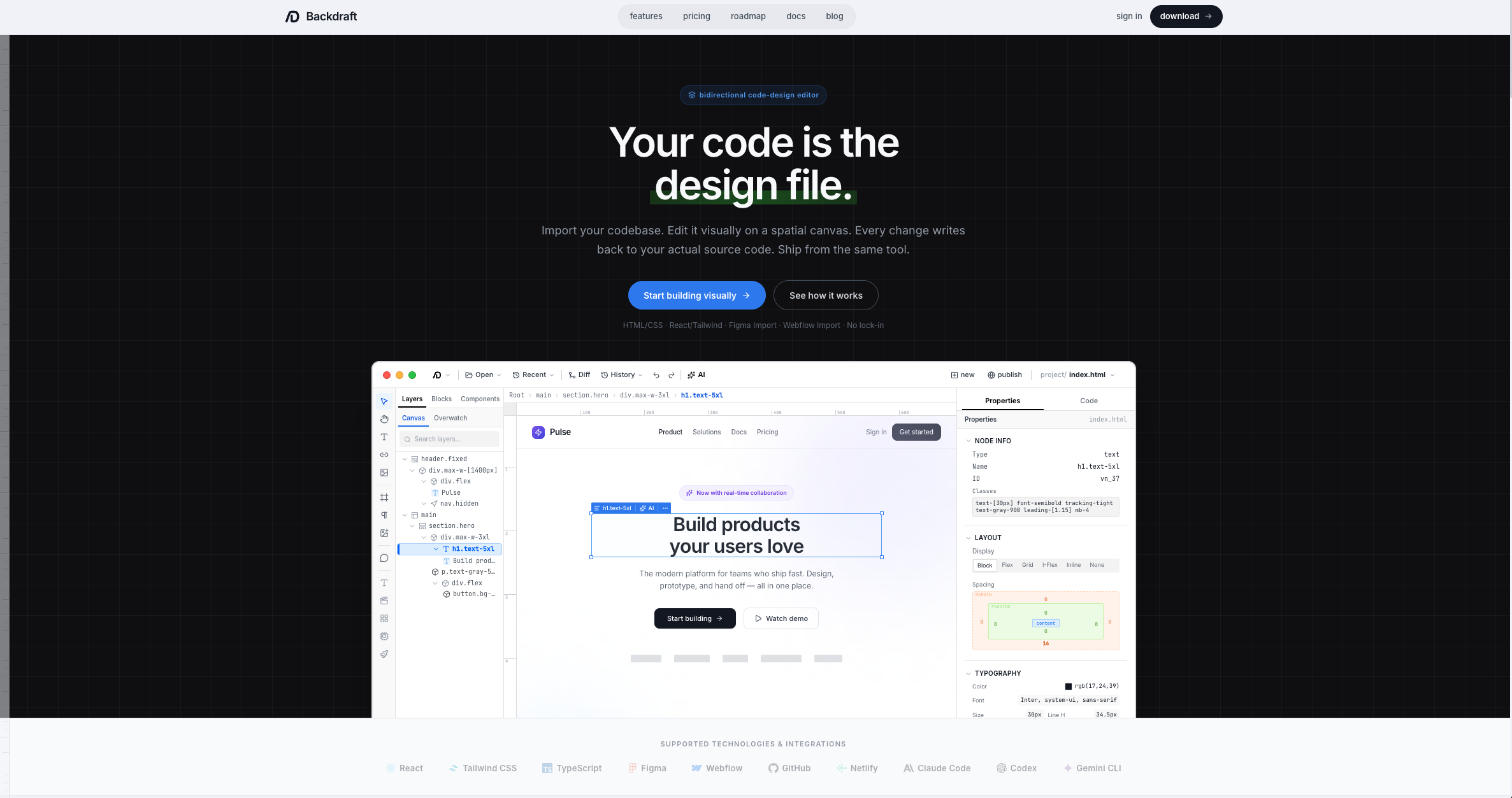

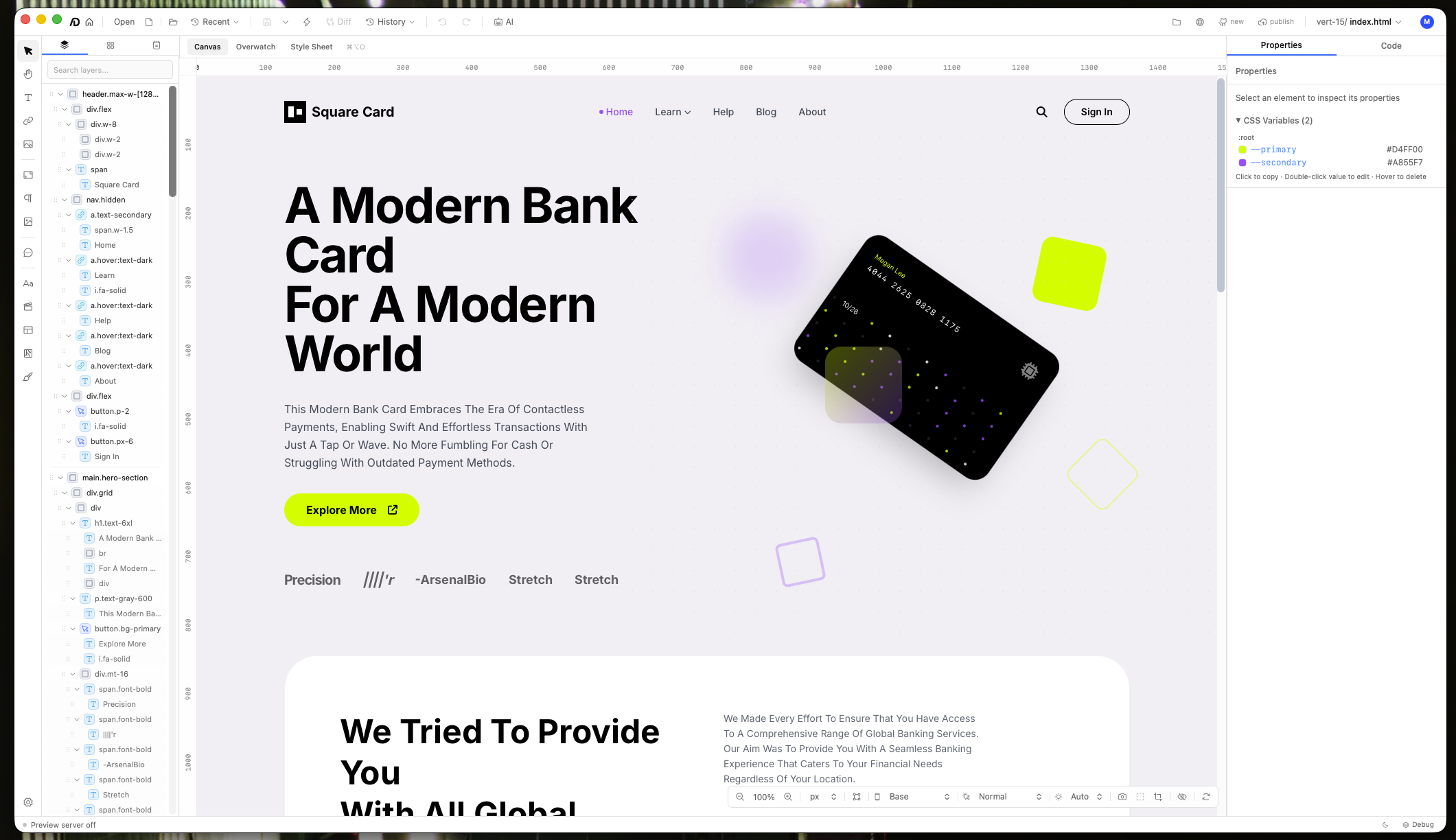

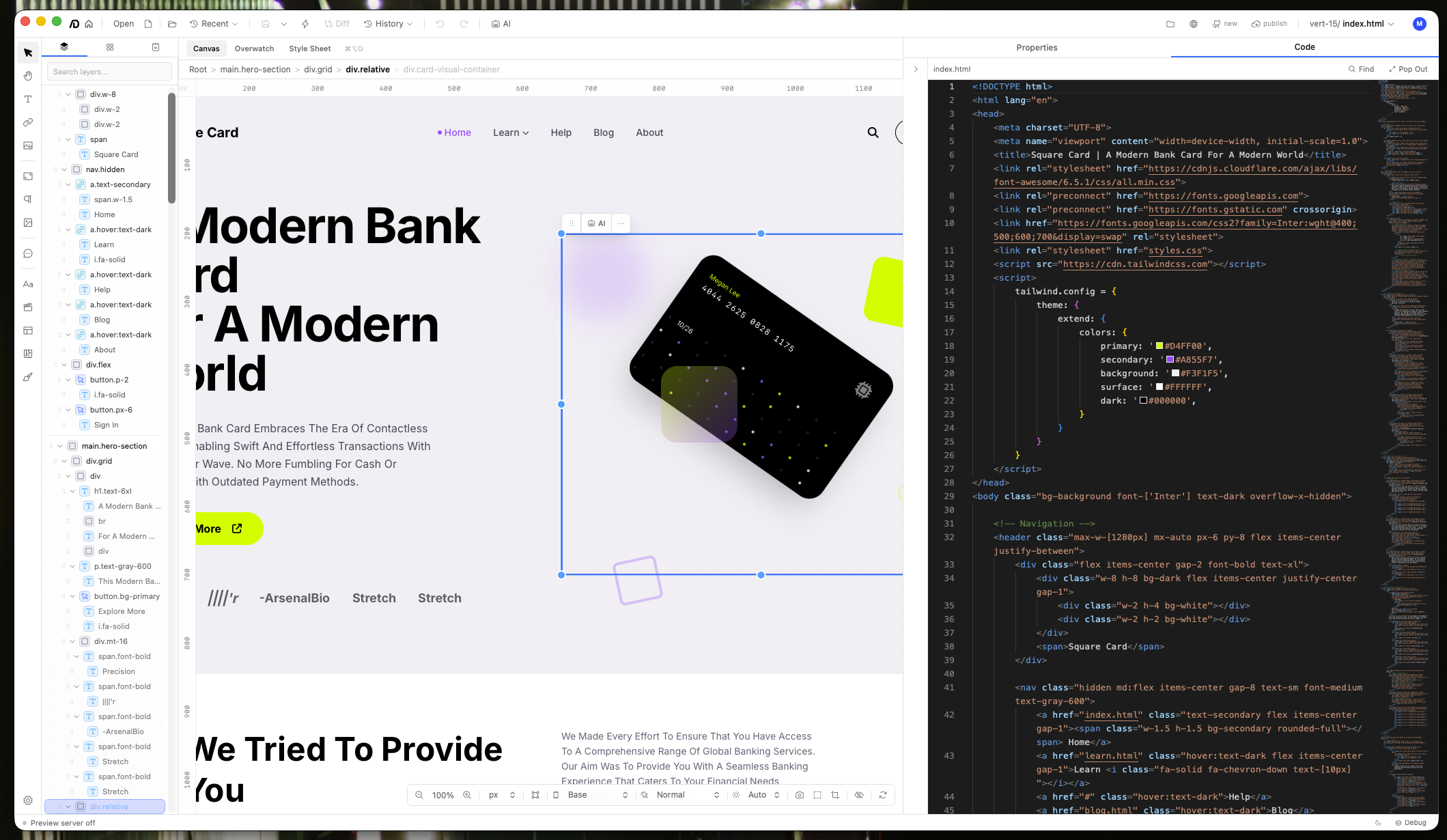

The editor canvas with a live project, showing the layer tree, visual canvas, and code panel.

The moment I decided to build a real product was when I saw other teams executing similar ideas. I'd been solving the hardest part of the problem for two years already. The tool just needed a proper interface.

The editor has a system layer between your raw source code and the visual canvas. It interprets and renders your actual files, then converts visual edits back into source code: your code, with your formatting and naming conventions intact.

Visual edits sync back to the source code in real time through the bidirectional layer.
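The write-back half of the bidirectional layer can be sketched with one common technique: record where each editable value lives in the source text, and on a visual edit splice in only the changed characters instead of regenerating the file. This is a minimal sketch under that assumption; `SourceSpan`, `EditableProp`, `findClassAttr`, and `applyEdit` are hypothetical names, and the regex stands in for a real parser.

```typescript
// Character offsets of a value inside the original source text.
interface SourceSpan {
  start: number; // inclusive
  end: number; // exclusive
}

// A property the canvas can edit, tied back to where it lives in the file.
interface EditableProp {
  name: string;
  value: string;
  span: SourceSpan;
}

// Find the first class="..." attribute value and record its span.
// Illustration only: a real implementation would use a proper parser.
function findClassAttr(source: string): EditableProp | null {
  const match = /class="([^"]*)"/.exec(source);
  if (!match) return null;
  const start = match.index + 'class="'.length;
  return { name: "class", value: match[1], span: { start, end: start + match[1].length } };
}

// Write a visual edit back by replacing only the recorded span, leaving every
// other character (indentation, spacing, comments) exactly as the author wrote it.
function applyEdit(source: string, prop: EditableProp, newValue: string): string {
  return source.slice(0, prop.span.start) + newValue + source.slice(prop.span.end);
}

const source = `<section>\n    <h1 class="title  big">Hello</h1>   <!-- keep me -->\n</section>`;
const prop = findClassAttr(source);
if (prop) {
  console.log(applyEdit(source, prop, "title huge"));
}
```

After a splice, any spans later in the file shift by the difference in length, so a fuller version would need to re-index spans or apply edits from the end of the file backwards.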

The hardest part was the adapter system. There are countless ways to write the same thing in code, and the tool has to read, parse, and make rendering decisions on every line across every supported language and framework.
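As a small illustration of "countless ways to write the same thing": HTML spells a class list as `class`, while JSX uses `className`. A minimal sketch of an adapter registry, assuming each adapter declares the file extensions it handles and normalizes its syntax into one shared canvas representation. `CanvasProps`, `FrameworkAdapter`, `htmlAdapter`, `jsxAdapter`, and `pickAdapter` are hypothetical names, not Backdraft's internals.

```typescript
// Framework-neutral representation the canvas works with.
interface CanvasProps {
  classes: string[];
}

// Each adapter knows how to read and write one framework's syntax.
interface FrameworkAdapter {
  name: string;
  extensions: string[];
  read(attrs: Record<string, string>): CanvasProps;
  write(props: CanvasProps): Record<string, string>;
}

// Split a class string on any whitespace, dropping empty entries.
const splitClasses = (s: string | undefined): string[] =>
  (s ?? "").split(/\s+/).filter((c) => c.length > 0);

const htmlAdapter: FrameworkAdapter = {
  name: "html",
  extensions: [".html"],
  read: (attrs) => ({ classes: splitClasses(attrs["class"]) }),
  write: (props) => ({ class: props.classes.join(" ") }),
};

const jsxAdapter: FrameworkAdapter = {
  name: "jsx",
  extensions: [".jsx", ".tsx"],
  read: (attrs) => ({ classes: splitClasses(attrs["className"]) }),
  write: (props) => ({ className: props.classes.join(" ") }),
};

const adapters: FrameworkAdapter[] = [htmlAdapter, jsxAdapter];

// Choose an adapter by file extension; undefined means the file type is unsupported.
function pickAdapter(filename: string): FrameworkAdapter | undefined {
  return adapters.find((a) => a.extensions.some((ext) => filename.endsWith(ext)));
}

// Same visual intent, two syntaxes, one canvas representation.
const fromHtml = pickAdapter("index.html")?.read({ class: "btn primary" });
const fromJsx = pickAdapter("Button.tsx")?.read({ className: "btn  primary" });
console.log(fromHtml, fromJsx);
```

Real codebases add many more variants (template literals, conditional class helpers, CSS-in-JS), and each one needs its own read and write rules.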

The AI agent mirrors a real design workflow: design system extraction, image sourcing through Unsplash, and a multi-model strategy. Gemini handles initial creative generation (best front-end design quality), then Claude Code or Codex takes over for sustained editing (better consistency over long sessions). The design system acts as connective tissue, keeping the aesthetic coherent regardless of which model is working at any given moment.
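The phase-based split can be sketched as a small router: creative generation goes to one model, sustained editing to another, and the same design system text is prepended to every prompt so whichever model is active sees the same constraints. The phase names, model labels, default editor, and the `pickModel` and `buildRequest` helpers are hypothetical placeholders; no real provider API is called here.

```typescript
type Phase = "generate" | "edit";
type Editor = "claude-code" | "codex";
type ModelLabel = "gemini" | Editor;

interface ModelRequest {
  model: ModelLabel;
  prompt: string;
}

// Route by phase: Gemini for initial creative generation,
// Claude Code or Codex for sustained editing (default chosen arbitrarily here).
function pickModel(phase: Phase, preferredEditor: Editor = "claude-code"): ModelLabel {
  return phase === "generate" ? "gemini" : preferredEditor;
}

// The design system is the shared context: every request carries it,
// so switching models does not reset the aesthetic constraints.
function buildRequest(
  phase: Phase,
  designSystem: string,
  task: string,
  preferredEditor?: Editor,
): ModelRequest {
  return {
    model: pickModel(phase, preferredEditor),
    prompt: `Design system:\n${designSystem}\n\nTask:\n${task}`,
  };
}

const ds = "Primary #e94560; headings Inter 700; 8px spacing grid";
const first = buildRequest("generate", ds, "Create a landing page hero.");
const later = buildRequest("edit", ds, "Tighten the pricing section spacing.", "codex");
console.log(first.model, later.model); // gemini codex
```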

A system layer between raw code and the canvas translates in both directions across a dynamic library of languages and frameworks.

Two years of refined prompts that capture structure, aesthetics, and components from any mockup with production-quality accuracy.

Gemini for initial design, Claude Code and Codex for sustained editing. The right model for each phase of the workflow.

Every page rendered at desktop, tablet, and mobile simultaneously. Spot responsive issues across the entire site at once.
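Rendering every page at three breakpoints at once comes down to laying out one frame per viewport on the canvas. A minimal sketch, assuming common preset sizes (1440, 768, and 375 CSS pixels wide) and a fixed gap between frames; the presets, the gap value, and the `layoutFrames` helper are illustrative assumptions, not Backdraft's actual settings.

```typescript
interface Viewport {
  label: "desktop" | "tablet" | "mobile";
  width: number; // CSS pixels
  height: number; // CSS pixels
}

interface Frame extends Viewport {
  x: number; // canvas position of the frame's left edge
  y: number;
}

// Assumed presets; many tools let users customize these.
const VIEWPORTS: Viewport[] = [
  { label: "desktop", width: 1440, height: 900 },
  { label: "tablet", width: 768, height: 1024 },
  { label: "mobile", width: 375, height: 812 },
];

// Place one frame per viewport in a row, top-aligned, separated by `gap`.
// One plausible approach is for each frame to host an iframe of the same page
// at that width, so width-based media queries respond to the frame's width.
function layoutFrames(viewports: Viewport[], gap = 80): Frame[] {
  let x = 0;
  return viewports.map((vp) => {
    const frame: Frame = { ...vp, x, y: 0 };
    x += vp.width + gap;
    return frame;
  });
}

const frames = layoutFrames(VIEWPORTS);
console.log(frames.map((f) => `${f.label}@${f.x}`).join(", "));
// desktop@0, tablet@1520, mobile@2368
```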