iOS App

Fitted AI

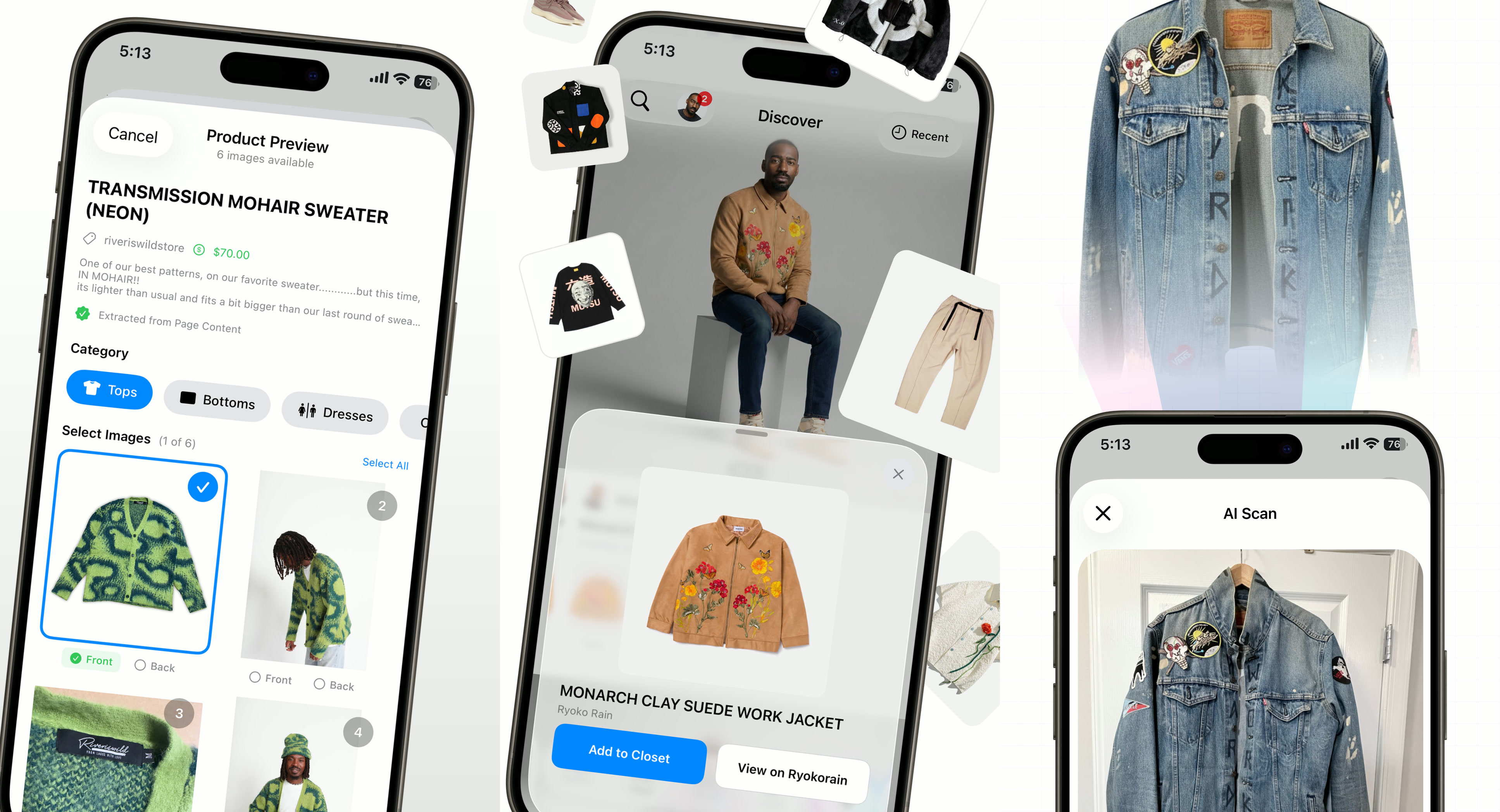

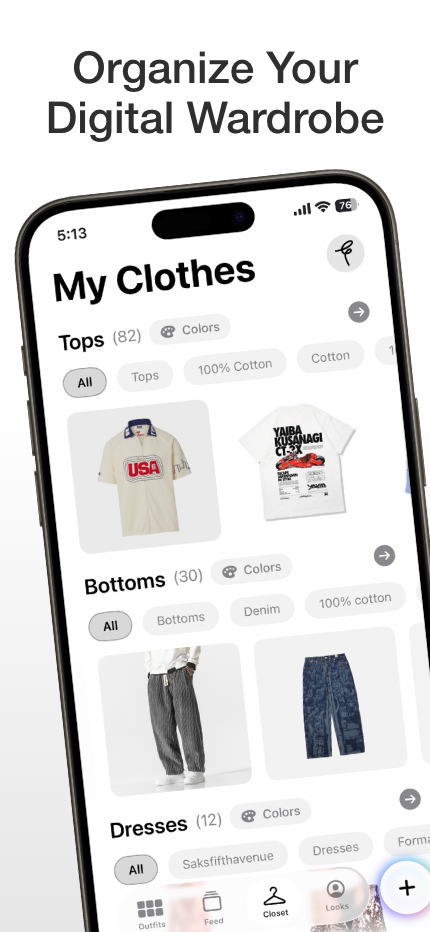

AI outfit visualization app. Upload a photo, describe an outfit, and see yourself wearing it. Published on the App Store with in-app purchases.

Fitted started as a CLI experiment when Google dropped their Nano Banana model. I built a tool that took a headshot, a medium shot, and some clothing items, then tried to get the model to swap the clothing as a virtual try-on. The first attempts were bad: hallucinated features, distorted proportions, collage-like outputs. By iterating on the prompt structure and input format, I found that the model needed specific conditions: multiple processing steps, reference images in a particular order, and a minimum number of inputs.

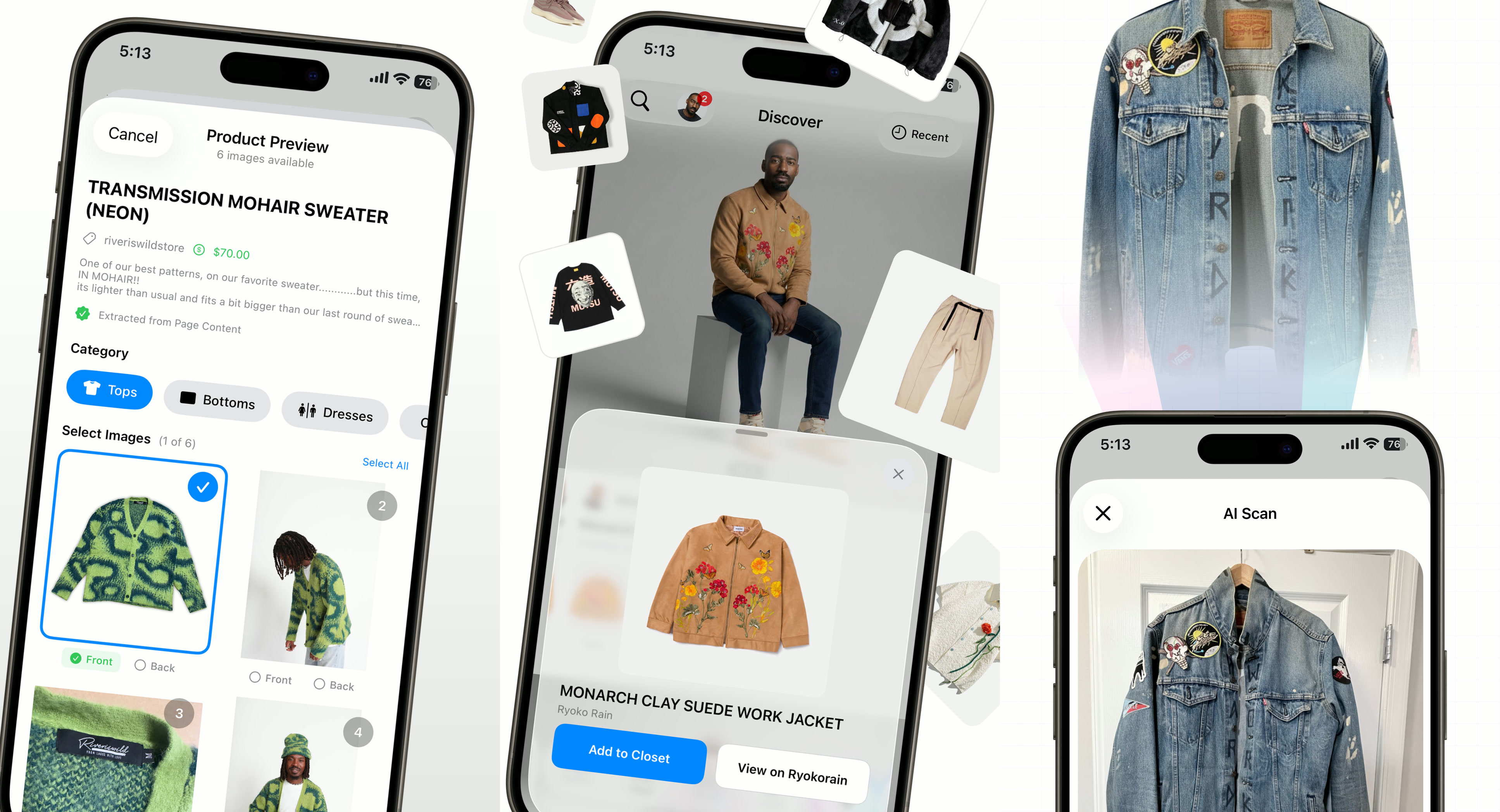

The core experience: upload your photo, pick an outfit, see yourself wearing it.

The technical stack evolved from Python scripts to a full FastAPI backend with SQL databases, Redis for caching, and Celery workers handling async image processing. A single outfit generation takes 30-60 seconds, so synchronous processing was never an option.

The core challenge is character consistency. People are extraordinarily sensitive to their own likeness, and any drift in facial features triggers the uncanny valley effect immediately. I reduced the required input from six images to four by building an avatar grid preprocessor — a composite showing the subject from multiple angles in a single image, freeing up API slots for clothing items.
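The layout side of an avatar grid can be sketched as follows. This only computes where each view would sit on a square canvas; the canvas size, column count, and the `grid_boxes` name are assumptions for illustration, not the preprocessor's actual parameters. Each box could then be handed to an imaging library's paste call to build the composite.

```python
import math
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom) in pixels


def grid_boxes(n_views: int, canvas: int = 1024, columns: int = 2) -> List[Box]:
    """Return one tile box per subject view, filled row by row.

    Assumption: a square canvas split into equal tiles; real layouts may differ.
    """
    if n_views < 1 or columns < 1:
        raise ValueError("n_views and columns must be positive")
    rows = math.ceil(n_views / columns)
    tile_w = canvas // columns
    tile_h = canvas // rows
    boxes = []
    for index in range(n_views):
        row, col = divmod(index, columns)
        left, top = col * tile_w, row * tile_h
        boxes.append((left, top, left + tile_w, top + tile_h))
    return boxes


# Four views of the subject packed into one 1024x1024 reference image.
print(grid_boxes(4))
```

Because every view lands in a single composite, the subject takes up one reference slot no matter how many angles the grid holds.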

The generation runs in four phases: subject establishment, outfit composition, clothing compositing, and quality checking. This sequenced approach is what produces usable outputs. Single-shot attempts produce gibberish and burn API credits.
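The sequencing can be sketched as a list of phase functions applied in order. The phase names come from the description above; everything else (the `GenerationState` fields, the placeholder bodies, the trivial quality rule) is hypothetical, and the real phases would each involve a model call rather than a log entry.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class GenerationState:
    """Hypothetical state carried from one phase to the next."""
    subject_ref: str
    clothing_refs: List[str]
    log: List[str] = field(default_factory=list)
    passed_quality: bool = False


def establish_subject(state: GenerationState) -> GenerationState:
    state.log.append("subject_establishment")
    return state


def compose_outfit(state: GenerationState) -> GenerationState:
    state.log.append("outfit_composition")
    return state


def composite_clothing(state: GenerationState) -> GenerationState:
    state.log.append("clothing_compositing")
    return state


def check_quality(state: GenerationState) -> GenerationState:
    state.log.append("quality_checking")
    # Placeholder rule: a real check would inspect the generated image.
    state.passed_quality = bool(state.subject_ref) and bool(state.clothing_refs)
    return state


PHASES: List[Callable[[GenerationState], GenerationState]] = [
    establish_subject,
    compose_outfit,
    composite_clothing,
    check_quality,
]


def run_pipeline(state: GenerationState) -> GenerationState:
    """Run each phase in order, feeding its output into the next."""
    for phase in PHASES:
        state = phase(state)
    return state
```

Keeping the phases as separate functions means a failed quality check can trigger a retry of one step instead of restarting the whole generation, which matters when every call costs API credits.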

After four submissions to Apple, working through in-app purchase requirements, social feed content policy, and user safety standards, the app was approved with in-app purchases, and I was authorized to do business in both the U.S. and the U.K.

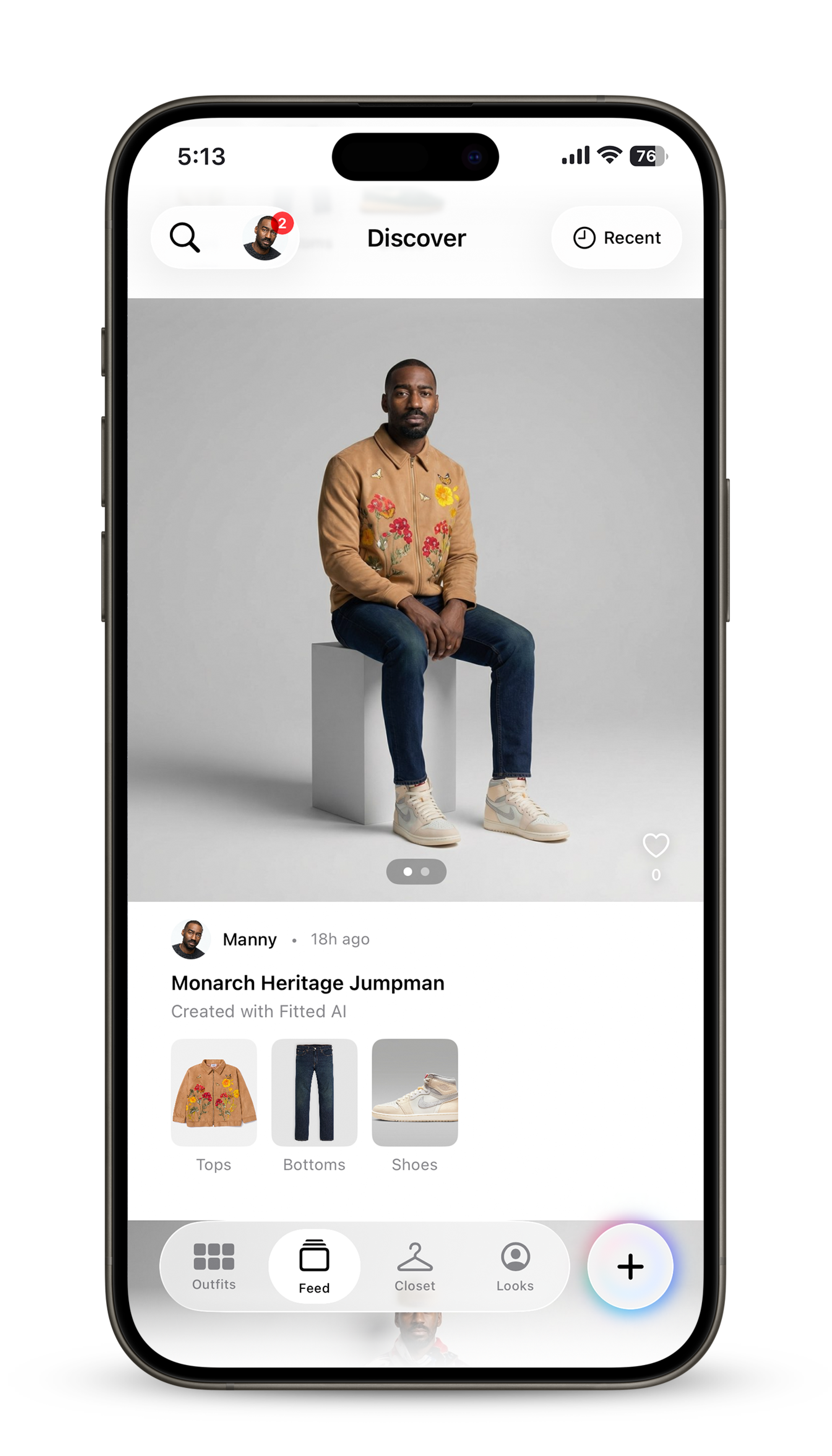

The social discovery feed where users browse and share outfit visualizations.

Over the course of the project I created 128 documentation files — architecture plans, API specifications, workflow diagrams, prompt libraries, debugging logs, and feature roadmaps. These documents served a dual purpose: they were my own reference for maintaining direction across a complex project, and they were context files that my LLMs could reference during coding sessions.

Social ad for the Fitted AI app launch.

Four-stage workflow with avatar grids, phased outfit definition, and pose sequencing for consistent outputs.

Four submissions to Apple. In-app purchases, social feed policy compliance, U.S. and U.K. business authorization.

Architecture plans, prompt libraries, and workflow specs used by both developer and LLMs to maintain direction.

Avatar grid preprocessing, aspect ratio normalization, and color extraction to preserve subject resemblance.
