The default

Classify. Summarize. Extract.

Most of what I ask an LLM to do isn't reasoning. It's classify, summarize, extract. Tag this transcript. Pull out the three key points. Decide if this email is personal or business. These are volume tasks — I run them thousands of times in a pipeline, and the cost adds up fast when every call goes to an API.

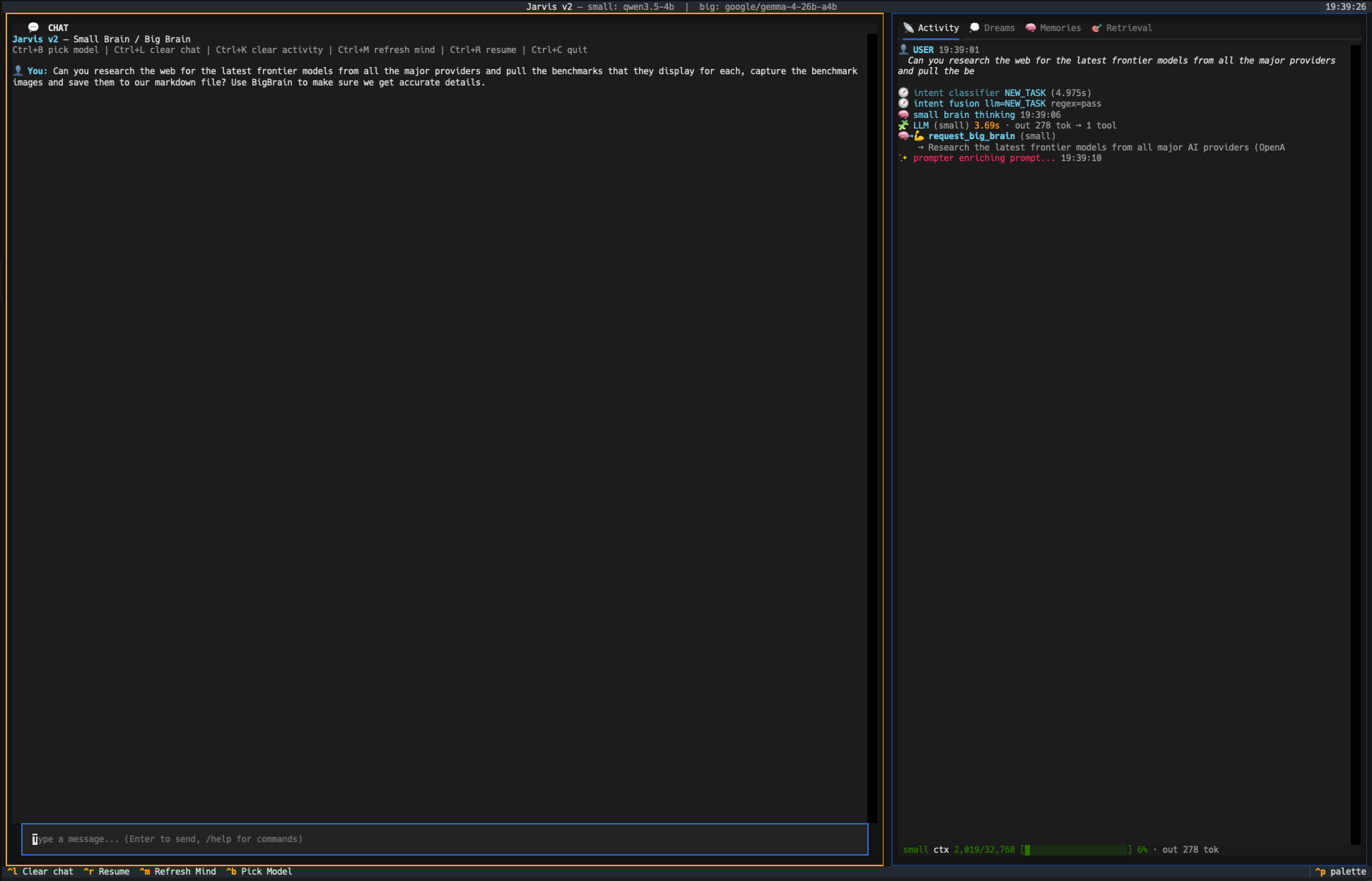

So I stopped sending them out. LM Studio runs on my laptop. A small model (Qwen 2B, Gemma 2B, something in that weight class) handles this kind of bulk work fine. Latency is lower because there's no network round-trip. The marginal cost per call is zero. And when the power goes out or the API rate-limits me, the pipeline keeps running.
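For a sense of what one of these local calls can look like, here is a minimal sketch. It assumes LM Studio's local server is running at its default address, `http://localhost:1234`, where it exposes an OpenAI-compatible chat completions endpoint. The model name, the label set, and the helper functions are illustrative placeholders, not the exact pipeline code.

```python
import json
import urllib.request
from typing import Optional

# Assumption: LM Studio's local server on its default port.
LOCAL_URL = "http://localhost:1234/v1/chat/completions"
# Hypothetical identifier; use the name LM Studio shows for the loaded model.
LOCAL_MODEL = "local-small-model"

# Fixed label set for the "personal or business" email task.
LABELS = ("personal", "business")


def build_classify_request(email_text: str) -> dict:
    """Build an OpenAI-style chat payload for a fixed-label classification."""
    instruction = (
        "Classify the email. Reply with exactly one word: "
        + " or ".join(LABELS) + "."
    )
    return {
        "model": LOCAL_MODEL,
        "temperature": 0,  # keep bulk classification as repeatable as possible
        "messages": [
            {"role": "system", "content": instruction},
            {"role": "user", "content": email_text},
        ],
    }


def parse_label(reply: str) -> Optional[str]:
    """Normalize the reply to one of LABELS, or None if the model drifted."""
    cleaned = reply.strip().strip(".").lower()
    return cleaned if cleaned in LABELS else None


def classify_email(email_text: str, timeout: float = 30.0) -> Optional[str]:
    """Send one classification request to the local server."""
    payload = json.dumps(build_classify_request(email_text)).encode("utf-8")
    request = urllib.request.Request(
        LOCAL_URL,
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return parse_label(body["choices"][0]["message"]["content"])
```

Calling `classify_email("Lunch on Friday?")` returns a label or `None`. Treating anything outside the label set as `None` means a drifting reply gets dropped or retried instead of polluting the pipeline.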

A local model does the grunt work on every keystroke. Only the hard requests escalate.

If the task is repetitive and the structure is fixed, a small local model will do it cheaper, faster, and just as well.

— A rule I've stopped questioning

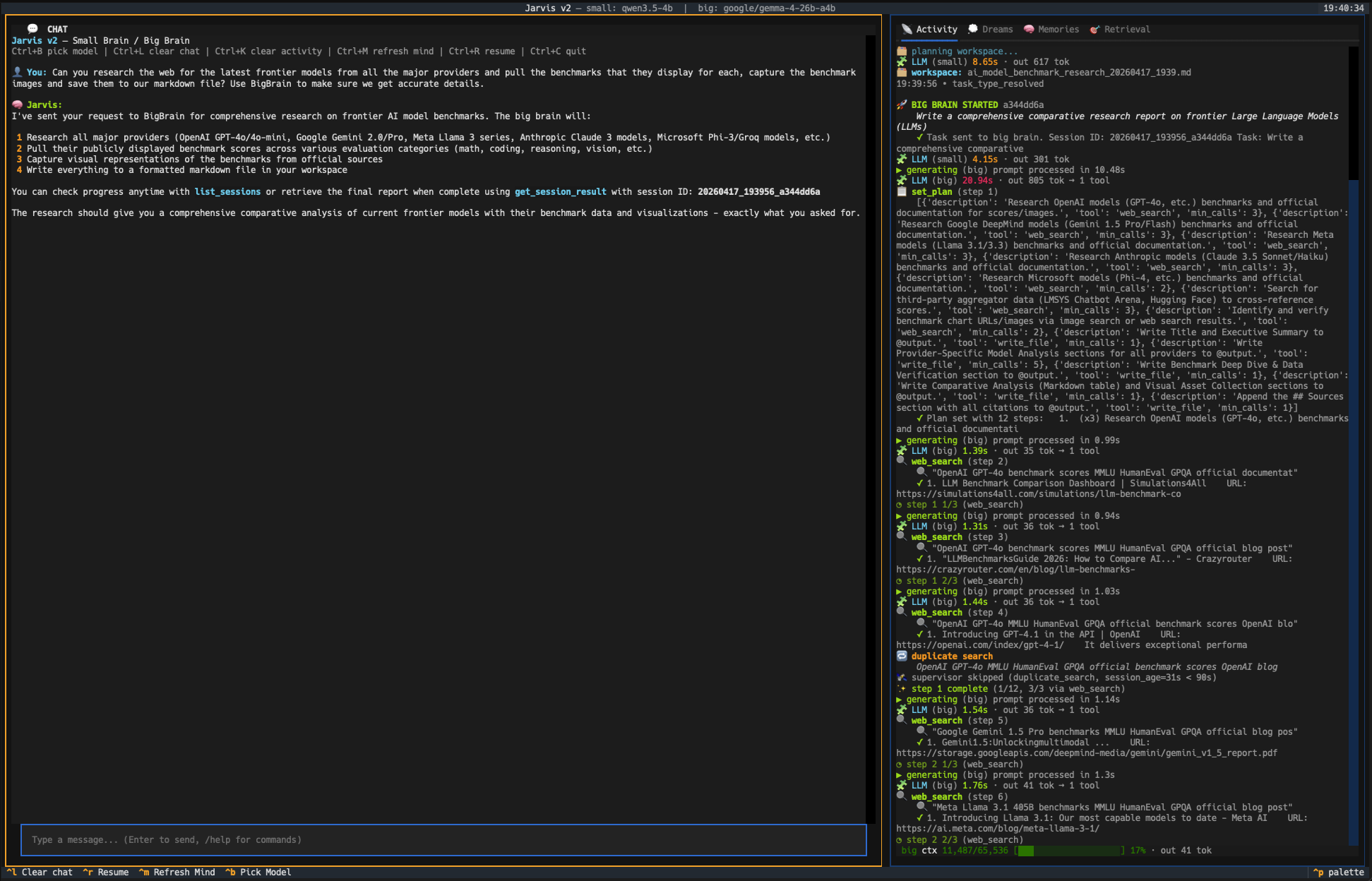

Where cloud still wins

Reserve the big models for the hard parts.

The cloud models earn their keep on deep synthesis. Planning a multi-step workflow. Debugging a gnarly failure. Writing a case study from interview transcripts. These are the places where a frontier model actually thinks differently — not just faster, but better. That's where I reach for Claude or GPT, because the work genuinely benefits from the extra horsepower.

Think of it like a team. The local model is the intern who handles intake. It reads everything, files it, flags what matters. The cloud model is the senior hire who only gets the hard problems.

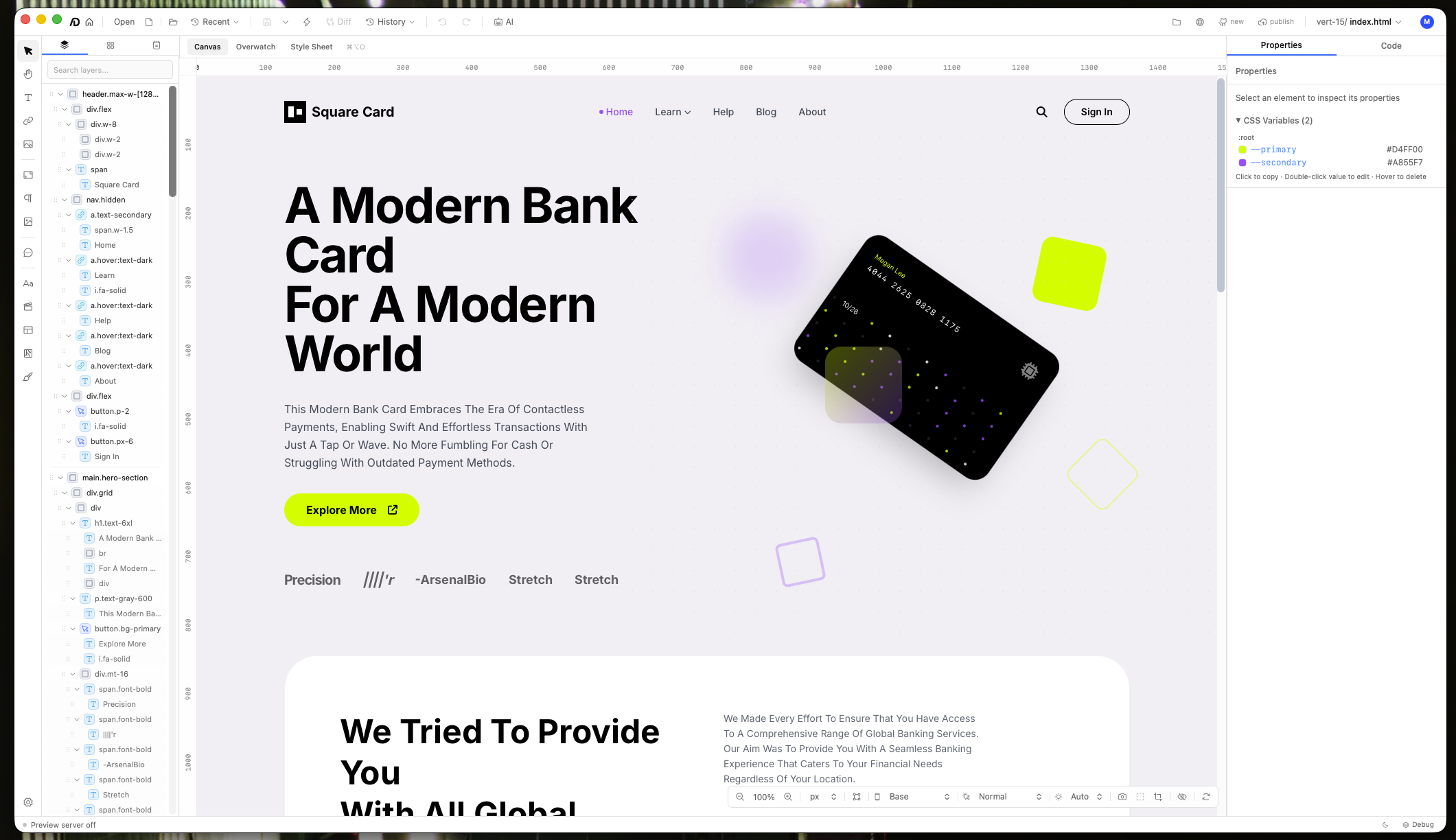

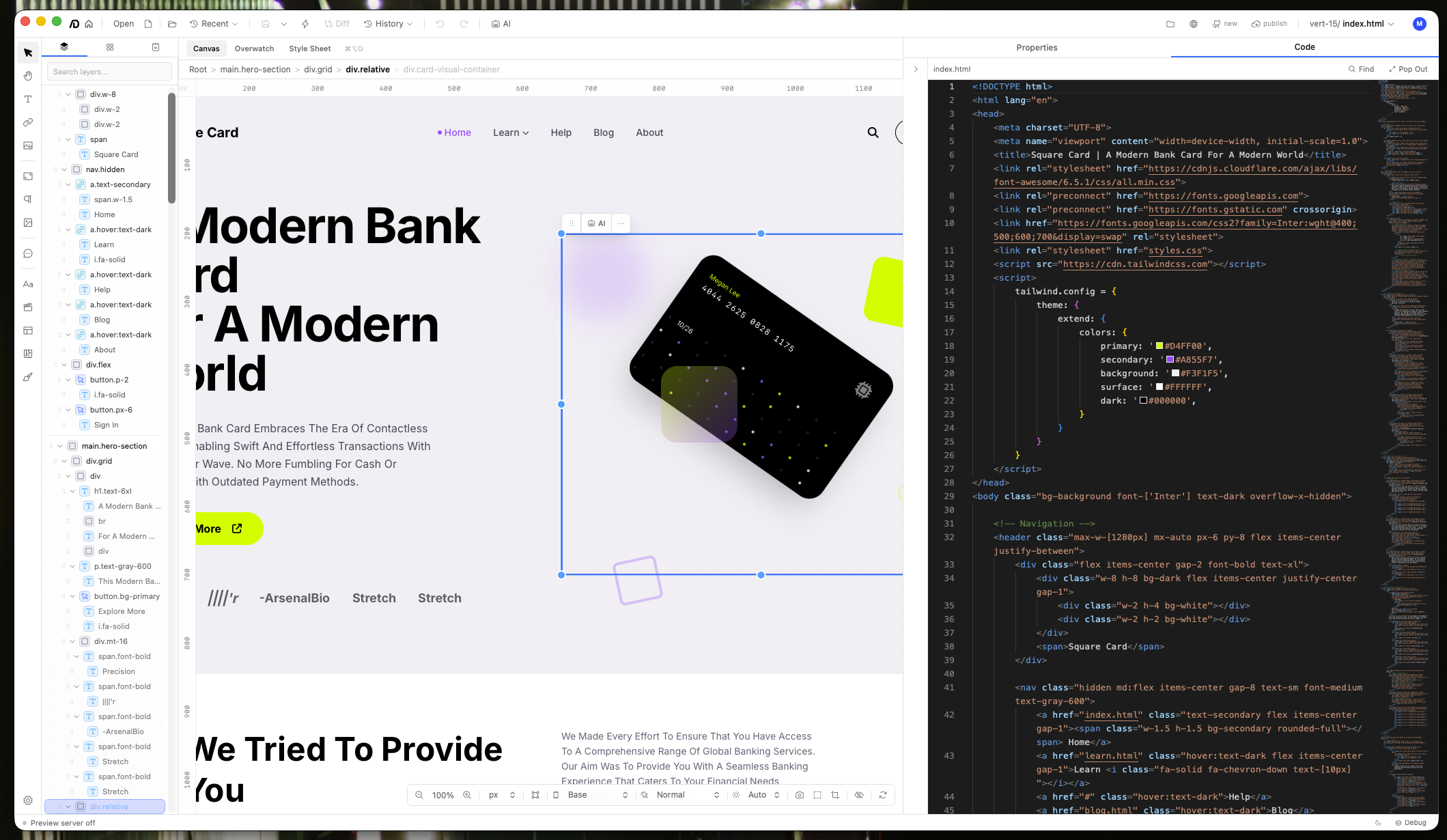

Three phases, three model tiers. The router decides which brain gets the work.

The practical setup

How it routes in practice.

My agents try the local endpoint first. If the task fits a schema (classify, extract, summarize), it stays local. If the router detects something open-ended (multi-step planning, ambiguous instructions, creative writing), it escalates to Claude or GPT.
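The routing rule above can be sketched in a few lines. This is an illustration under stated assumptions, not the actual router: the `Request` shape, the `"local"` and `"cloud"` tier names, and the keyword hints are all hypothetical, and a real router could just as well use a small classifier model to spot open-ended requests.

```python
from dataclasses import dataclass

# Schema-shaped task types that stay on the local model.
LOCAL_TASKS = {"classify", "extract", "summarize"}

# Hypothetical signals that a free-form request is open-ended.
# Substring matching is crude (e.g. "plan" also matches "explanation").
OPEN_ENDED_HINTS = ("plan", "debug", "draft", "brainstorm")


@dataclass(frozen=True)
class Request:
    task: str         # declared task type, or "freeform" when none applies
    instruction: str  # what the user asked for, not the document being processed


def route(request: Request) -> str:
    """Return the tier that should handle the request: "local" or "cloud"."""
    # Fixed-structure tasks never leave the laptop.
    if request.task in LOCAL_TASKS:
        return "local"
    # Free-form requests escalate only when they look open-ended.
    instruction = request.instruction.lower()
    if any(hint in instruction for hint in OPEN_ENDED_HINTS):
        return "cloud"
    # Everything else defaults to the local endpoint.
    return "local"
```

For example, `route(Request("classify", "Is this email personal or business?"))` stays on `"local"`, while `route(Request("freeform", "Plan a three-step migration"))` goes to `"cloud"`. Matching only on the instruction, not the payload, keeps a long transcript that happens to mention planning from triggering an escalation.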

The beautiful part: this split is invisible to the user. I just talk to the assistant. It figures out which brain does the work.

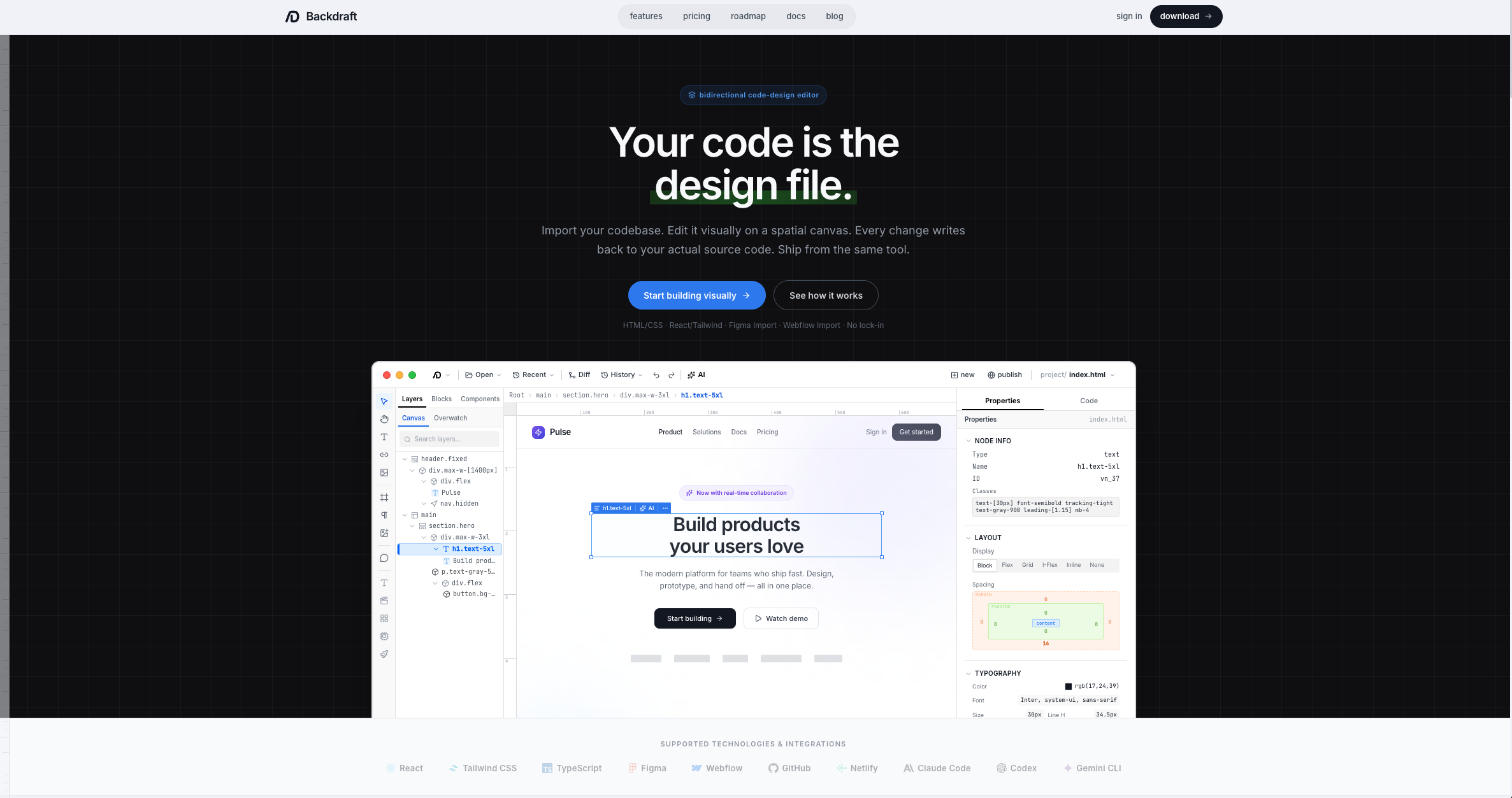

Physical tools on the desk.

Digital tools on the laptop.

Same principle for hardware — right tool for the task, not the most expensive one.
