
Mosaic: a pre-focus layer for local vision models

Every modern vision model already chunks images to understand them. Local models need that chunking made explicit, because they can't paper over a missed detail the way a frontier model can.

Opening

Image models already chunk. Mosaic just does it out loud.

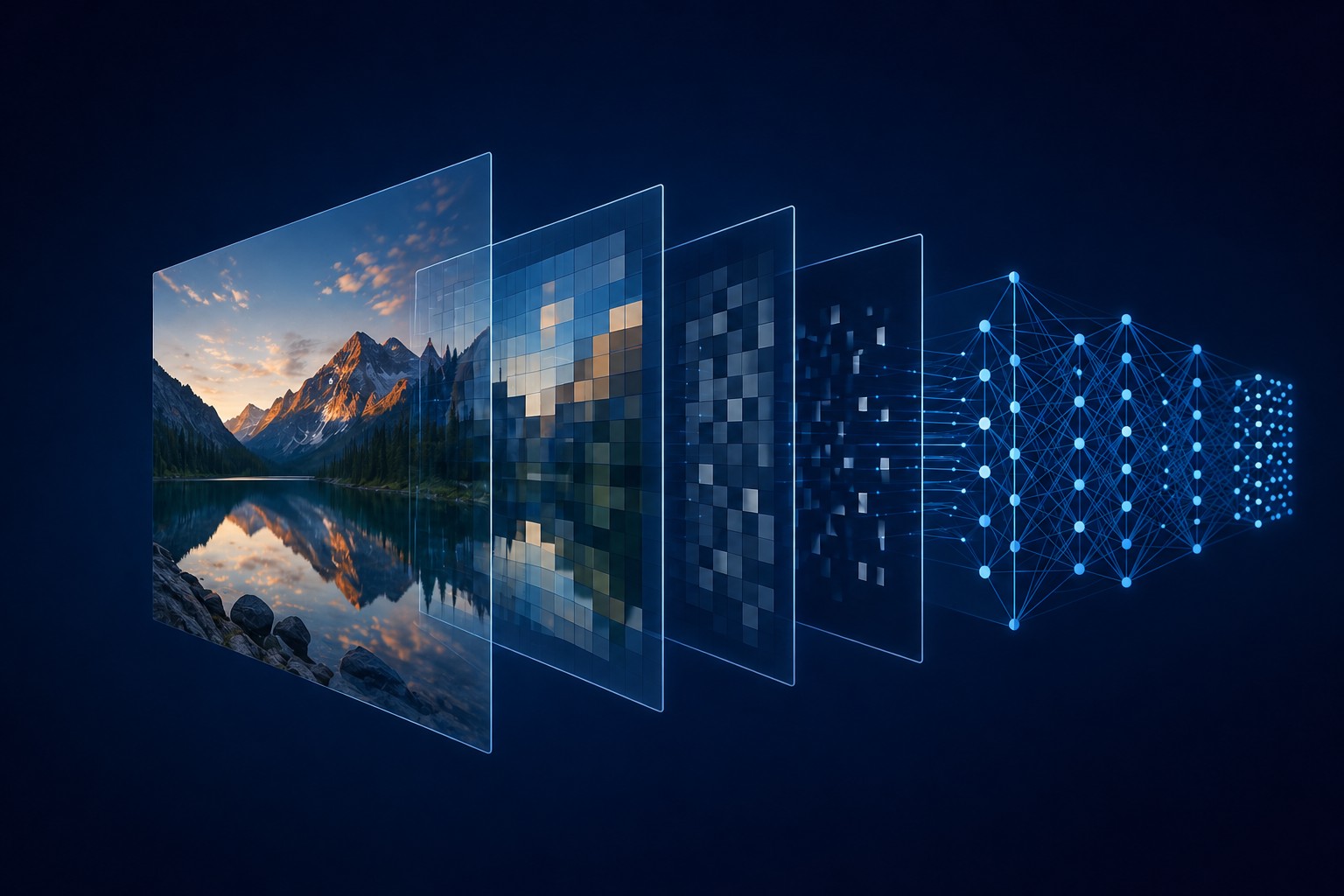

Vision transformers don't read images. They read a list of patches. A 224×224 image becomes a grid of squares, each one linearly embedded as a token, fed to the same kind of transformer that reads text. ViT proved this in 2020, and every vision-language model since has done a version of it.

What changed is the resolution. Real photos aren't 224×224. So LLaVA-NeXT splits a high-resolution image into a grid of sub-images before any of them reach the encoder. Qwen2-VL packs arbitrary resolution into one sequence with a 2D positional scheme. Pixtral processes images at native resolution and inserts explicit break tokens between rows of patches.

The consensus across modern VLMs is the same. To see the picture, see it in pieces.

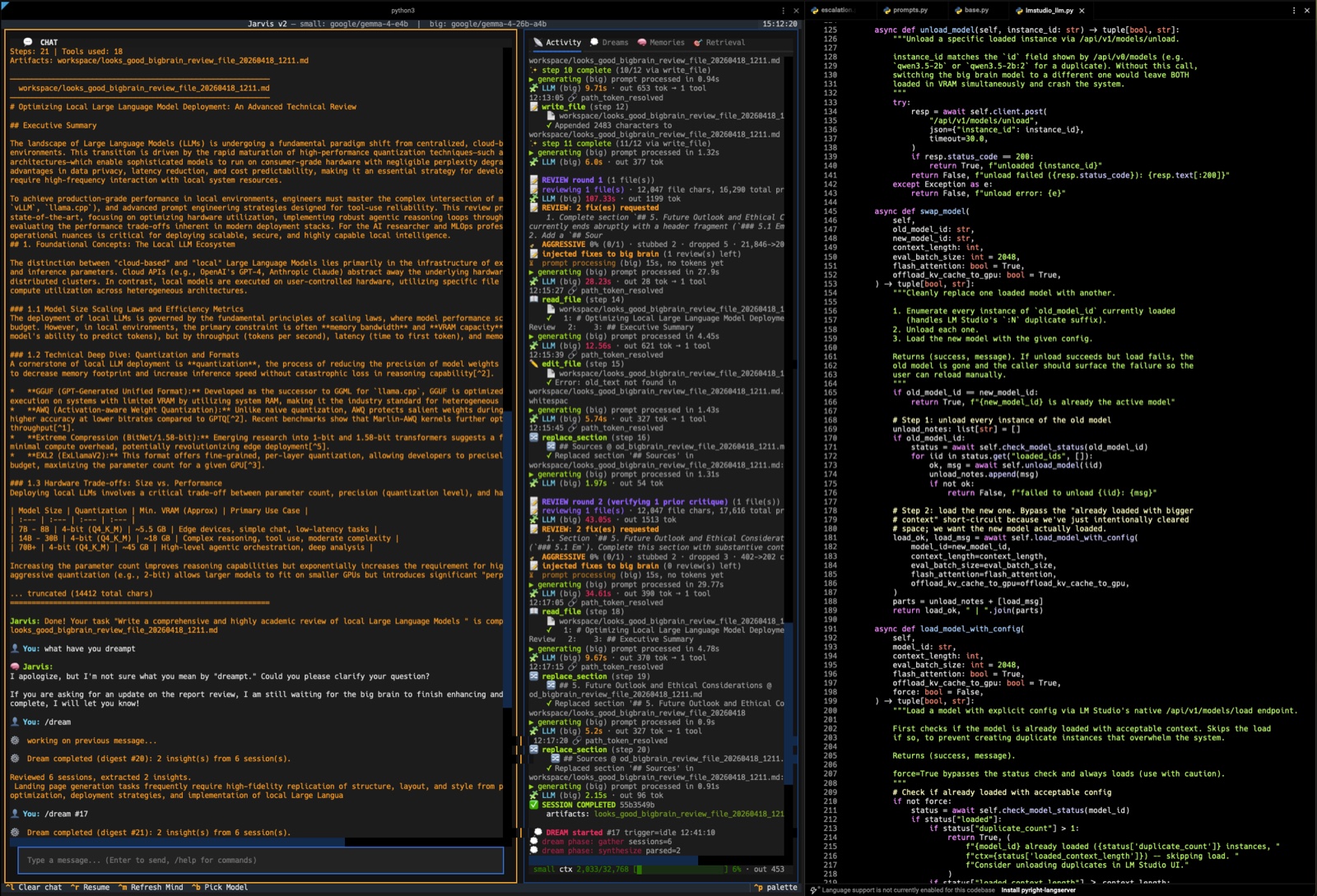

Mosaic is a small CLI I wrote that does the chunking one layer above the model. Before the image reaches the encoder at all, Mosaic splits it into a grid, walks each tile alongside its neighbors, and writes the final review with the whole picture back in view. A pre-focus layer for the model underneath.

The point isn't novelty. The point is that local models, the kind I run on a laptop, can't paper over what they miss the way a frontier model can.

One full pass on the Tokyo drone shot at light density (4×2 grid, 8 tiles). The first two tiles run at real pace; the rest cycle through the same focal-plus-neighbor pattern rapidly so the full flow lands in under thirty seconds.

Inside the encoder

Modern VLMs split before they understand.

ViT-B/16 takes a 224×224 image and chops it into 196 patches plus one CLS token. ViT-L/14 cuts the same image into 256 patches. The patch grid is the model's first move every time, and the rest of the network operates on that grid as a sequence of tokens.
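The patch arithmetic is easy to check by hand. A minimal sketch, where `patch_tokens` is a name made up for this post and not part of any library:

```python
def patch_tokens(image_size: int, patch_size: int, cls_token: bool = True) -> int:
    """Count the tokens a plain ViT sees for a square image."""
    if image_size % patch_size != 0:
        raise ValueError("image size must be a multiple of the patch size")
    per_side = image_size // patch_size  # patches along one edge
    return per_side * per_side + (1 if cls_token else 0)


print(patch_tokens(224, 16))                   # ViT-B/16: 14 x 14 patches plus CLS
print(patch_tokens(224, 14, cls_token=False))  # ViT-L/14: 16 x 16 patches
```

A smaller patch means more tokens for the same image, which is why resolution gets expensive so quickly.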

Once people started training these models on real, high-resolution photos, the 224×224 input became a bottleneck. The fix wasn't to make the encoder bigger. It was to chunk the input image before the encoder.

LLaVA-NeXT's AnyRes describes this directly:

> AnyRes naturally represents a high-resolution image into multiple images that a pre-trained ViT is able to digest, and forms them into a concatenated sequence.

Grid configurations include 2×2, 1×4, and 4×1, supporting inputs up to 672×672 or 336×1344. Every sub-image runs through the same ViT, then the token sequences get concatenated.
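Those limits fall out of simple multiplication. A rough sketch, assuming a 336-pixel encoder input (implied by 672 = 2 × 336) and a 14-pixel patch. `anyres_layout` is a hypothetical helper, and the count ignores any global thumbnail or separator tokens a real implementation may add:

```python
BASE = 336   # assumed side of one encoder input, implied by 672 = 2 x 336
PATCH = 14   # assumed ViT patch side in pixels


def anyres_layout(rows: int, cols: int) -> dict:
    """Canvas size and patch-token count for a rows x cols grid of sub-images."""
    per_tile = (BASE // PATCH) ** 2  # patch tokens produced by one sub-image
    return {
        "canvas": (rows * BASE, cols * BASE),
        "sub_images": rows * cols,
        "patch_tokens": rows * cols * per_tile,
    }


for grid in [(2, 2), (1, 4), (4, 1)]:
    print(grid, anyres_layout(*grid))
```

Every layout here has four sub-images, so the token cost is the same. Only the shape of the canvas changes.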

Qwen2-VL takes a different angle. Its "Naive Dynamic Resolution" removes the absolute position embeddings from the ViT, adds 2D RoPE, and packs variable-resolution images into one sequence. An MLP after the ViT compresses adjacent 2×2 token blocks 4-to-1, so the smallest visual unit the model addresses is roughly 28×28 pixels. The encoder still tiles. It just hides the seams better.
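The 28×28 figure follows from the patch size and the merge step. A small sketch, assuming a 14-pixel patch (which is what makes 2 × 14 = 28) and counting only merged visual tokens, not any start or end markers. `merged_tokens` is an illustrative name, not a Qwen2-VL API:

```python
PATCH = 14  # assumed ViT patch side in pixels
MERGE = 2   # the MLP folds each 2 x 2 block of patch tokens into one


def merged_tokens(height: int, width: int) -> int:
    """Visual tokens left after 2 x 2 merging, for sizes aligned to the 28 px unit."""
    unit = PATCH * MERGE
    if height % unit or width % unit:
        raise ValueError(f"height and width must be multiples of {unit}")
    return (height // unit) * (width // unit)


print(merged_tokens(224, 224))   # 8 x 8 units
print(merged_tokens(1288, 728))  # a larger, non-square input
```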

Pixtral, Molmo, LLaVA-UHD: every recent open VLM I've looked at does some version of this. The architecture-level pattern is settled. Tile the image, encode each piece, glue the pieces back together with positional information, and let attention sort it out from there.

Diagram by [Cosmia Nebula](https://commons.wikimedia.org/wiki/File:Vision_Transformer.svg), after Zhang, Lipton, Li & Smola's _Dive into Deep Learning_, via Wikimedia Commons. CC BY-SA 4.0.

Where local breaks down

Local models can't paper over the chunking.

If chunking is universal, why surface it at the application layer?

Because the bigger the model, the more invisibly the chunking works. A frontier-grade VLM with a long context and a deep encoder can absorb a high-resolution image, hold every tile's embedding in attention, and stitch a coherent answer out of the whole sequence. You drop a phone photo into ChatGPT and you don't think about how the patches got there.

Local models aren't there yet. Two specific limits matter.

Attention budget per call. A 4B model splitting its attention across hundreds of image tokens and a long instruction starts losing fidelity on small text and dense regions. The model still produces a confident answer. It just papers over the parts it can't read clearly, or hallucinates them outright.

Context rot in the middle. "Lost in the Middle" (Liu et al., 2023) reports a U-shaped accuracy curve over the position of the relevant passage in the context. In its multi-document QA test, accuracy is around 75% when the answer is at position 1, drops to roughly 55% at position 10, and recovers to about 72% at position 20. That is roughly a 20-point drop in the middle, and the effect persists in models marketed as long-context. The same dynamic plausibly applies to images packed into a long sequence: the middle tiles are the easiest for the model to skim past.

Frontier models also feel this. They just have enough capacity left over to compensate. A small local model doesn't.

Mosaic forces the model to look at every tile in isolation, then again paired with each of its neighbors. Each call is short. Each call has the focal tile near the front of the prompt. Each call gives the model minimal context to lose track of.

The pipeline

Read the whole thing. Then the parts. Then the whole thing again.

Mosaic runs in three stages.

Stage 1, orientation. The model sees the full image at 1280px max and writes a short summary: scene type, layout, regions worth inspecting closely. This becomes a text prefix passed to every subsequent call so the model knows where each tile sits in the larger picture.
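The downscale for the orientation pass is a one-liner in spirit. A sketch, assuming "1280px max" refers to the longest side and that smaller images are left alone. `fit_within` is a hypothetical helper, not Mosaic's code:

```python
def fit_within(width: int, height: int, max_side: int = 1280) -> tuple:
    """Scale (width, height) so the longest side is at most max_side, keeping aspect ratio."""
    longest = max(width, height)
    if longest <= max_side:
        return width, height  # never upscale
    scale = max_side / longest
    return max(1, round(width * scale)), max(1, round(height * scale))


print(fit_within(4000, 3000))  # a typical 4:3 camera frame
print(fit_within(800, 600))    # already small enough
```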

Stage 2, per-tile inspection. The image is split into a grid (2×2, 3×3, 4×4, or 5×5 depending on density). For each tile, the model is called once with the tile alone, then once more for each neighbor, with the focal tile and that neighbor paired. A corner tile gets two paired calls. A center tile gets four. A small text-only synthesis call then merges each tile's observations into one tile report.
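The grid and the neighbor lookup can be sketched in a few lines. This is not Mosaic's source. It assumes non-overlapping tiles and edge-adjacent neighbors only, which matches the two-calls-for-a-corner, four-for-a-center counts. Both function names are made up for illustration:

```python
def tile_boxes(width: int, height: int, cols: int, rows: int):
    """Yield ((row, col), (left, top, right, bottom)) boxes that cover the image."""
    for r in range(rows):
        for c in range(cols):
            left, right = c * width // cols, (c + 1) * width // cols
            top, bottom = r * height // rows, (r + 1) * height // rows
            yield (r, c), (left, top, right, bottom)


def neighbors(r: int, c: int, cols: int, rows: int) -> list:
    """Edge-adjacent tiles (up, down, left, right) that exist in the grid."""
    candidates = [(r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)]
    return [(nr, nc) for nr, nc in candidates if 0 <= nr < rows and 0 <= nc < cols]


boxes = dict(tile_boxes(1280, 640, cols=4, rows=2))
print(boxes[(0, 0)], boxes[(1, 3)])
print(neighbors(0, 0, cols=3, rows=3))  # corner tile
print(neighbors(1, 1, cols=3, rows=3))  # center tile
```

The boxes use the (left, top, right, bottom) order that Pillow's `Image.crop` accepts, so they can be handed to it directly.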

Stage 3, synthesis. The model sees the full image again, plus every tile report from stage 2, and writes the final review.

The paired-neighbor calls turn out to matter. A tile alone tells you what's in that crop. A tile paired with the tile to its left tells you what continues across the boundary. That's how you catch a sign whose text spans two tiles, or a shop name written across an awning that runs from one column into the next.
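Put together, the three stages look roughly like the loop below. It is a sketch under stated assumptions: `ask` stands in for whatever function sends a prompt and some images to the model, the prompt strings are placeholders, and the image labels are plain strings so the flow can be traced without a model attached.

```python
from typing import Callable, Sequence

Ask = Callable[[str, Sequence[str]], str]  # (prompt, image labels) -> model text


def run_mosaic(ask: Ask, cols: int, rows: int) -> str:
    # Stage 1: orientation on the full image; the summary prefixes later prompts.
    overview = ask("Summarize scene type, layout, and regions worth a closer look.", ["full"])
    reports = []
    for r in range(rows):
        for c in range(cols):
            focal = f"tile[{r},{c}]"
            # Stage 2a: the tile alone.
            notes = [ask(f"{overview}\nInspect this tile.", [focal])]
            # Stage 2b: the tile paired with each neighbor, focal tile first.
            for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
                if 0 <= nr < rows and 0 <= nc < cols:
                    pair = [focal, f"tile[{nr},{nc}]"]
                    notes.append(ask(f"{overview}\nInspect the first tile with its neighbor.", pair))
            # Stage 2c: text-only merge into one tile report.
            reports.append(ask("Merge these notes into one tile report:\n" + "\n".join(notes), []))
    # Stage 3: full image again, plus every tile report.
    return ask("Write the final review.\n" + "\n".join(reports), ["full"])


calls = []

def fake_ask(prompt: str, images: Sequence[str]) -> str:
    calls.append(list(images))  # record which images each call received
    return f"note {len(calls)}"

run_mosaic(fake_ask, cols=4, rows=2)
for images in calls[:4]:
    print(images)
```

The recording stub makes the call order visible: full image, focal tile alone, then the focal tile with each neighbor in turn.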

Same image, four model sizes

Nobody read the surname. The bigger models got closer.

I ran the drone photo through Qwen 3.5 2B, Gemma 4 7.5B, Gemma 4 26B-A4B, and Qwen 3.6 35B-A3B at light density (4×2 grid, 8 tiles, 38 calls per run). Same prompt, same pipeline, same image. Total runtime ranged from 1.5 minutes on the 2B up to 9.6 on the 35B.
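One way to account for the 38 calls, assuming one orientation call, one solo call per tile, one paired call per tile per neighbor, one merge call per tile, and one final synthesis. That breakdown is a reading of the pipeline description, not a figure taken from Mosaic's logs, and `mosaic_calls` is a made-up name:

```python
def mosaic_calls(cols: int, rows: int) -> dict:
    """Break down model calls for one run on a cols x rows grid."""
    tiles = cols * rows
    # Each shared edge is visited twice, once from each side.
    shared_edges = rows * (cols - 1) + cols * (rows - 1)
    return {
        "orientation": 1,
        "solo": tiles,
        "paired": 2 * shared_edges,
        "tile_synthesis": tiles,
        "final_synthesis": 1,
    }


breakdown = mosaic_calls(cols=4, rows=2)
print(breakdown)
print(sum(breakdown.values()))  # 38
```

On a 4×2 grid, the paired calls alone are 20 of the total, so the neighbor pass is where most of the runtime goes.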

Then I went back to the source photo and read the signage myself, character by character. The vertical wooden sign on the left building reads 並木 (Namiki). The bright white sign at upper right reads roughly 質 / 買取 / 楠本 with a phone number. It looks like a pawn shop named Kusumoto. There's no Shibuya Station signage anywhere in the frame, no "Kinbi," no "Konbee."

Each model's reading against the ground truth:

- Qwen 2B at quick density read 並. The first kanji of 並木. An honest partial.

- Qwen 2B at light density wrote "KONBEE," "KINBI," and "Shibuya Station South Exit." None of those are in the image. Higher density spread its attention thin and the model started inventing.

- Gemma 7.5B at light read 買取 (correct) and surnamed the pawn shop 橋本 (Hashimoto). Wrong kanji entirely. Different radicals.

- Gemma 26B-A4B at light wrote 桶本 (Okemoto). Wrong, but the second kanji 本 matches the actual sign.

- Qwen 35B-A3B at light wrote "Kusunoki" (楠木). The first kanji 楠 is correct. The second is a different character in the same family. The closest reading any model produced.

The honest pattern: no local model exactly read the surname on that sign. The bigger models hovered closer. The smallest model went straight to invention at higher density.

Why partial wins still matter

Close but wrong is still useful.

If no model nailed the surname, was this a failure?

No. Two reasons.

A one-shot pass would have produced nothing. I tested it. Drop the same image into the same models with no chunking, just "describe everything in this image," and the surname doesn't appear at all. The chunking elevated 2B from "there is text on a sign" to 並. It elevated 35B from generic "Japanese signage" to 楠木. Half a reading is a real lead. Zero is not.

Cross-model disagreement is signal. Three models gave three different readings of the same sign. That disagreement tells you the sign is hard. The image is dim. The text is small. The camera angle distorts the strokes. A downstream pipeline (search, human review, a second opinion from a different model family) has somewhere to focus instead of nowhere.
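Disagreement can be turned into a number. The sketch below is a hypothetical downstream step, not something Mosaic does today: it lines up the three surname readings from the run above and reports, per character position, the most common character and the share of models that agree on it.

```python
from collections import Counter


def position_votes(readings: list) -> list:
    """For each character position, return (most common character, share of readings agreeing)."""
    length = max(len(r) for r in readings)
    votes = []
    for i in range(length):
        chars = Counter(r[i] for r in readings if i < len(r))
        char, count = chars.most_common(1)[0]
        votes.append((char, count / len(readings)))
    return votes


readings = ["橋本", "桶本", "楠木"]  # Gemma 7.5B, Gemma 26B-A4B, Qwen 35B-A3B
votes = position_votes(readings)
for position, (char, share) in enumerate(votes):
    print(position, char, f"{share:.2f}")
```

A low share at a position marks the character that a human or a second model family should recheck first.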

The right framing isn't "local models can OCR Japanese signage." It's "local models converge on enough ground truth that downstream verification becomes tractable."

Density

Match density to model size, or the small ones start inventing.

The right density depends on the model.

2×2 quick for sub-3B models. Four tiles is enough to surface coarse detail without overloading attention. The model stays grounded. This is the right floor for any model that hasn't been trained specifically to handle dense visual grids.

3×3 light for the 7-10B class. Eight to nine tiles (the wide drone shot above ran as 4×2, so eight). Enough to catch street-level signage on a typical photo, while still keeping each call's attention focused.

4×4 dense for 20B and up. Sixteen tiles surfaces fine-grained text, license plates, the kind of detail that needs sustained attention to extract. Don't run a 4B at this density. It will confidently invent.
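As a lookup, the guidance above might read like this. The three bands come from the text. Two things are assumptions: sizes between the named bands (3 to 7B, 10 to 20B) fall back to the coarser grid, and the input is total parameter count in billions. `pick_grid` is a hypothetical helper.

```python
def pick_grid(params_billion: float) -> tuple:
    """Return (density name, cols, rows) for a model of the given size."""
    if params_billion >= 20:
        return ("dense", 4, 4)
    if params_billion >= 7:
        return ("light", 3, 3)
    # Assumption: anything below 7B stays on the coarse grid to avoid invention.
    return ("quick", 2, 2)


for size in (2, 4, 7.5, 35):
    print(size, pick_grid(size))
```

Rounding down is the cautious choice here, since the failure at too high a density is confident invention, not a missed detail.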

What's next

Phase-dependent prompts: zoom, then ask one thing at a time.

The 35B's near-miss on 楠本 points at the next axis worth tuning. The model wasn't asked to read text. It was asked to inspect the tile. The text reading came out as a side effect of "describe what's here."

Give that same model a tile and ask it just for text. Then a separate call asking just for the people in the tile. Then a separate call for lighting and any defects. Each call has fewer things to attend to, fewer distractors, fewer ways to drift.

This is the same insight that made tiling work in the first place, applied to the prompt instead of the image. Mosaic already splits the image. The prompt still asks for everything at once. The next version splits the prompt too.

A phase-dependent pass on the same tile might run like this:

- Text pass. "Transcribe any text visible in this tile. Romanize and translate non-Latin scripts. If a character is partial or ambiguous, mark it as unclear instead of guessing."

- Subjects pass. "List the objects, people, vehicles, and architectural elements visible in this tile."

- Characteristics pass. "Describe lighting, color, focus, weather, time of day, and any image defects."

Three calls instead of one per tile, each with the model's attention narrowed to a single dimension. The synthesis step combines them the same way it combines tile reports today.
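In code, the three passes could be a small table of prompts and a loop. A sketch only: the prompt text is taken from the list above, while `ask` and its `(prompt, tile)` signature are placeholders for however the model is actually called.

```python
from typing import Callable

PHASES = {
    "text": (
        "Transcribe any text visible in this tile. Romanize and translate "
        "non-Latin scripts. If a character is partial or ambiguous, mark it "
        "as unclear instead of guessing."
    ),
    "subjects": (
        "List the objects, people, vehicles, and architectural elements "
        "visible in this tile."
    ),
    "characteristics": (
        "Describe lighting, color, focus, weather, time of day, and any "
        "image defects."
    ),
}


def inspect_tile(ask: Callable[[str, str], str], tile: str) -> dict:
    """Run one narrow call per phase and return the answers keyed by phase name."""
    return {name: ask(prompt, tile) for name, prompt in PHASES.items()}


seen = []

def fake_ask(prompt: str, tile: str) -> str:
    seen.append((tile, prompt))  # stand-in for a real model call
    return f"answer {len(seen)}"

result = inspect_tile(fake_ask, "tile[0,0]")
print(list(result))
```

Keeping the phases in a dict makes it cheap to add or drop a pass per model size without touching the loop.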

More time. Less drift per call. For a small model running locally, that's the right trade.

Speed isn't the goal. Quality at local scale is.

Closing

If big models need to chunk, small ones need it more.

The chunking has always been there. Inside ViT, inside LLaVA-NeXT, inside Qwen2-VL. Modern vision models see the image in pieces because seeing the whole thing at once has never worked.

Frontier models hide that fact. They have enough attention surface, enough context, enough training signal to make any prompt feel like one shot. On a 7B running on a laptop, that compensation runs out fast. The image arrives at the encoder, the model splits it into patches, and whatever didn't survive that split is gone.

Mosaic puts the splitting back in the open. It costs more time to run. What it gives back is a small local model meeting the image halfway, with enough ground truth on the table for a downstream pass (a phase-dependent reread, a different model, a human) to take it the rest of the way. That's the quality the local-model use case actually needs.
