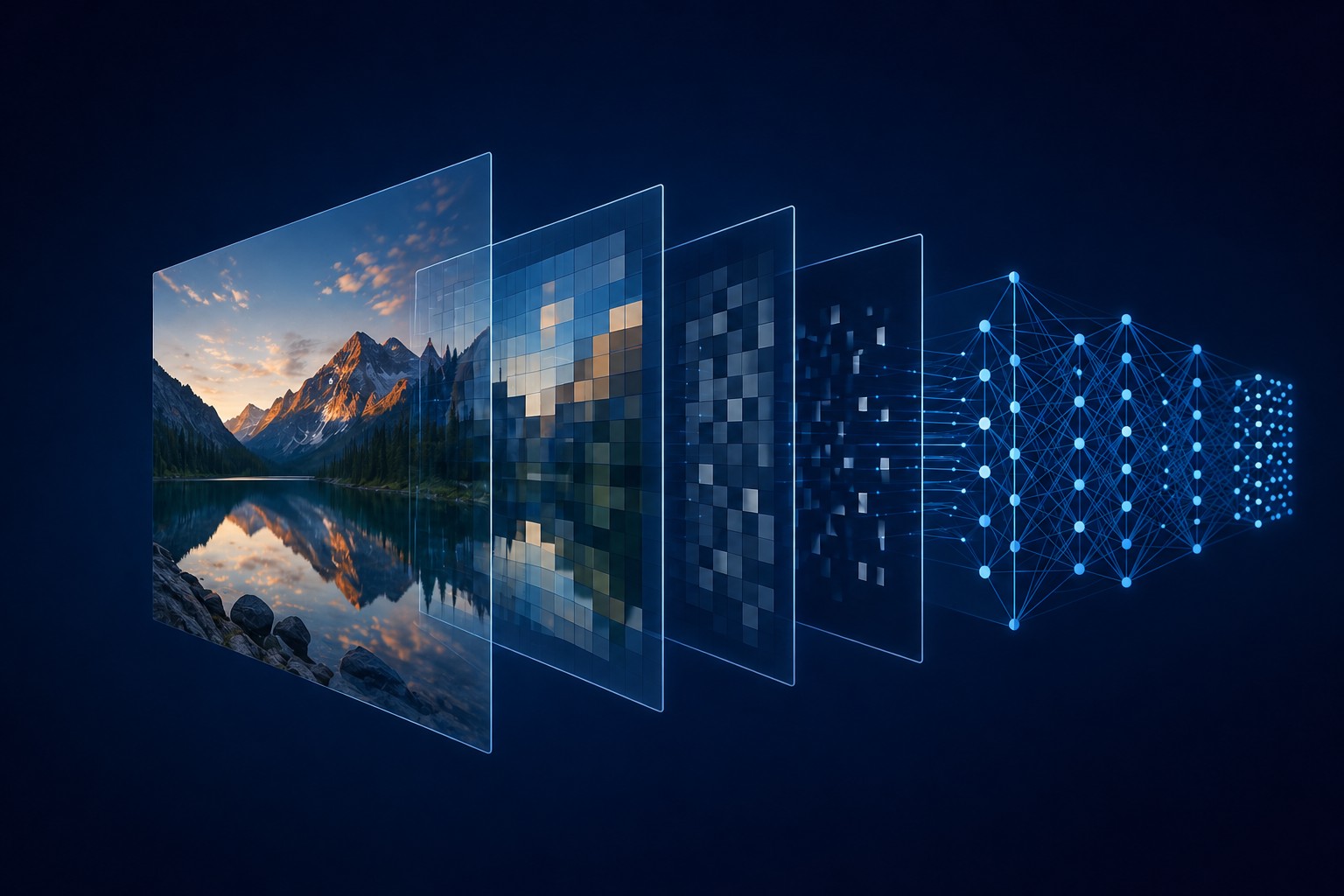

Mosaic: a pre-focus layer for local vision models

Every modern vision model already chunks images to understand them. Local models need that chunking made explicit, because they can't paper over a missed detail the way a frontier model can.

Catching the 7,000-character write

When your local model's tool call drops a required parameter and a long file almost gets thrown away.

The bottom-up edit rule

When a model queues five edits against one file, working top-down is a bug. Here's the order that fixed it.

The 12,000-token message I didn't know I was sending

My agent's context window kept jumping from 22% to 60% in a single turn. The leak wasn't where I was looking.

How I'd set up LM Studio today

A recent Llama.cpp update pushed me from 60 tokens per second to 80-plus on the same machine. Here's what I'd run and what I'd turn on.